Michal Cichocki, Senior Software Engineer and Head of GCP Discipline at EPAM Anywhere, reviewed and verified these interview questions and answers. Thanks a lot, Michal!

Preparing for a Google Cloud interview can be daunting, given the vast array of services and concepts one needs to understand. This guide aims to help you navigate through this challenge.

We've compiled a list of common Google Cloud interview questions that cover key areas, from basic principles to more advanced concepts. Whether you're a beginner looking to start out in cloud computing or aiming to advance your career, these questions will provide a solid foundation for your preparation for the technical interview. Let's dive in and explore these questions to help you ace your Google Cloud interview.

1. Describe Google Cloud Platform and its role on a high level

Google Cloud Platform (GCP) is a collection of cloud computing services by Google. It covers all major areas such as computing, storage, machine learning, and data analytics. GCP matters because it provides businesses with flexible, scalable, and cost-effective solutions that run on Google's technology infrastructure. This, in turn, allows businesses to focus on their core competencies, leaving the technical complexities to Google.

2. Can you explain the difference between Google Cloud Storage and Google Cloud SQL?

Google Cloud Storage is an object storage service for storing and retrieving any data at any time. It is ideal for unstructured data like media files, backups, etc. On the other hand, Google Cloud SQL is a fully-managed relational database service for MySQL, PostgreSQL, and SQL Server. It is ideal for structured data and supports transactions, complex joins, and other SQL features.

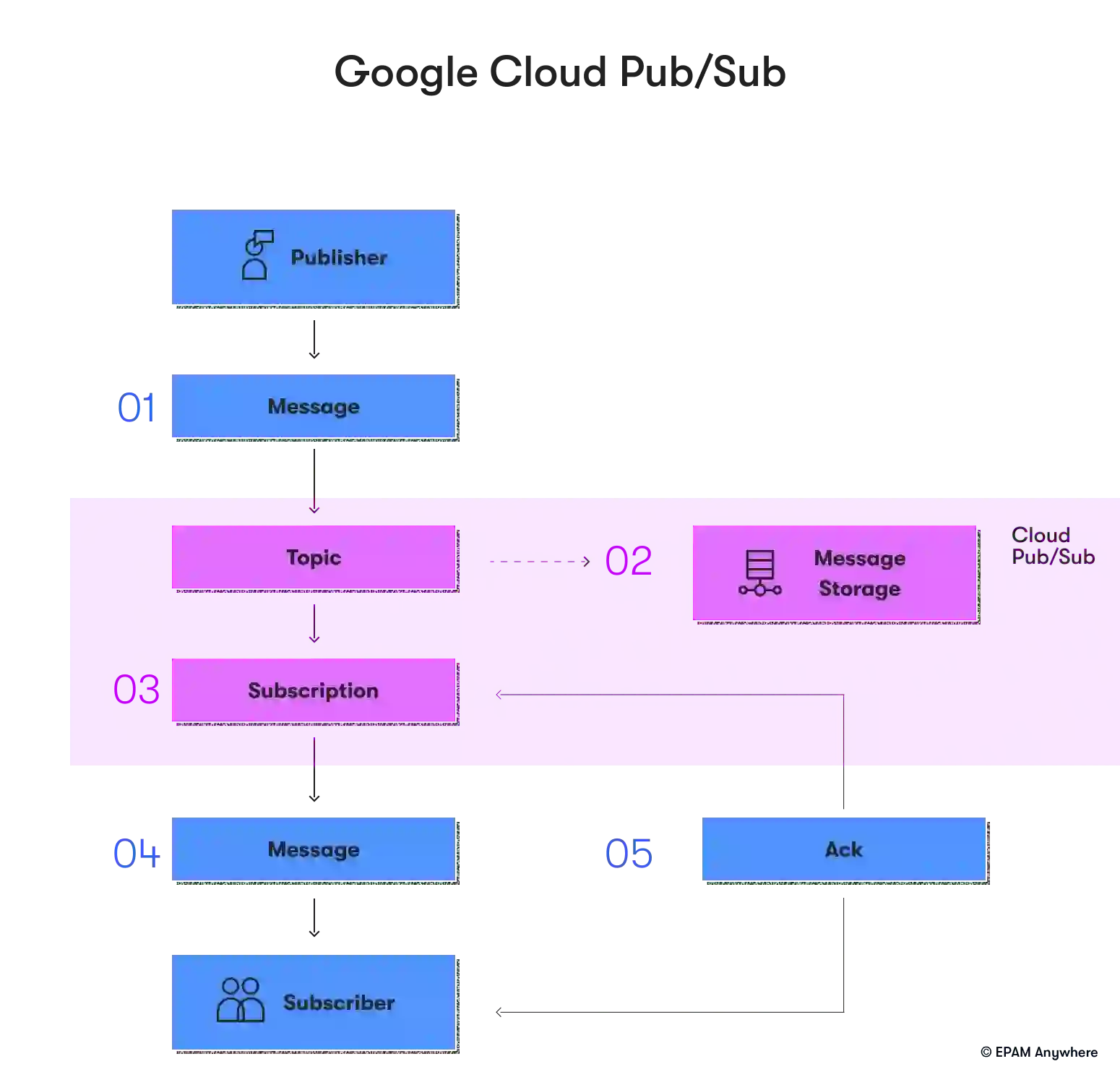

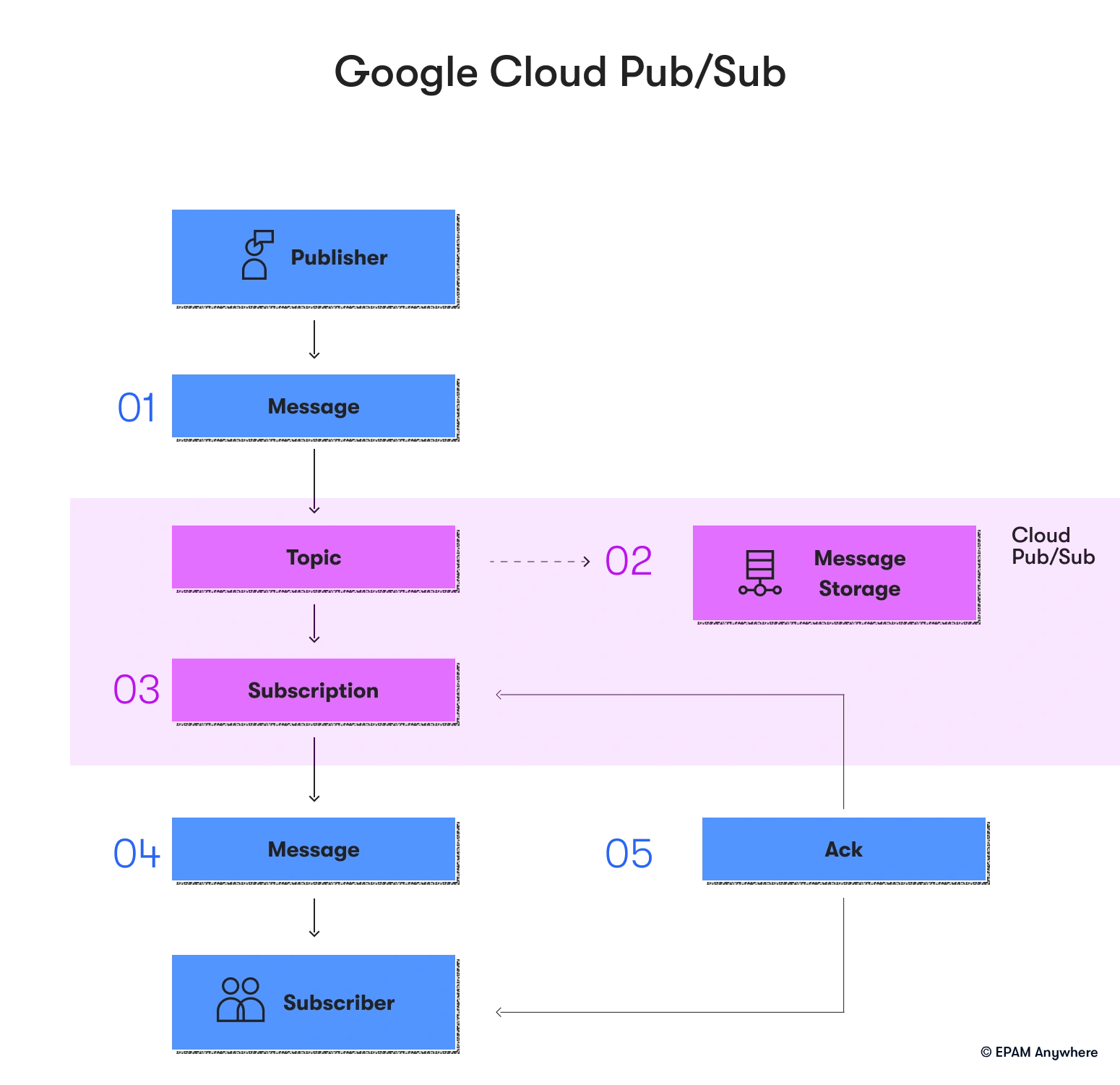

3. What is Google Cloud Pub/Sub, and how does it work?

Google Cloud Pub/Sub is a messaging service created for sending and receiving messages between independent applications. It works on the principle of the publisher-subscriber model. Publishers create and send messages to "topics". Subscribers then receive those messages through a subscription to those topics.
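The publisher-subscriber flow described above can be illustrated with a small in-memory model. This is a toy sketch, not the Google Cloud client library: the `Broker` class, its method names, and the topic and subscription names are all hypothetical and only mirror the topic/subscription concepts.

```python
from collections import defaultdict, deque


class Broker:
    """Toy in-memory stand-in for a Pub/Sub service (hypothetical, not the real API)."""

    def __init__(self):
        # topic name -> list of subscription names attached to it
        self._topic_subs = defaultdict(list)
        # subscription name -> queue of undelivered messages
        self._queues = {}

    def create_subscription(self, topic, subscription):
        self._topic_subs[topic].append(subscription)
        self._queues[subscription] = deque()

    def publish(self, topic, message):
        # Every subscription on the topic gets its own copy of the message.
        for subscription in self._topic_subs[topic]:
            self._queues[subscription].append(message)

    def pull(self, subscription):
        # Return the oldest pending message, or None if the queue is empty.
        queue = self._queues[subscription]
        return queue.popleft() if queue else None


broker = Broker()
broker.create_subscription("orders", "billing-sub")
broker.create_subscription("orders", "shipping-sub")
broker.publish("orders", "order-1001 created")

billing_msg = broker.pull("billing-sub")
shipping_msg = broker.pull("shipping-sub")
```

Each subscription receives its own copy of the message, so the publisher never needs to know who is listening. The real service also handles acknowledgements and redelivery, which this sketch leaves out.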

4. Can you describe the role of Google Kubernetes Engine?

Google Kubernetes Engine (GKE) is a managed environment for deploying, scaling, and managing containerized applications. It takes care of the underlying Kubernetes infrastructure, so you can focus on deploying applications, scaling them based on demand, and improving resource utilization.
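"Scaling based on demand" can be made concrete with the replica calculation used by the Kubernetes Horizontal Pod Autoscaler, which is available on GKE: the desired replica count is the current count multiplied by the ratio of the observed metric to the target metric, rounded up. The sketch below is a simplified approximation; the function name and the bounds are illustrative, and the real autoscaler also applies tolerances and stabilization windows.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Approximate the HPA rule: ceil(current * currentMetric / targetMetric)."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp to the configured bounds, as an autoscaler would.
    return max(min_replicas, min(max_replicas, raw))


# 4 pods averaging 90% CPU against a 60% target: scale out.
scale_out = desired_replicas(4, current_metric=90, target_metric=60)
# 4 pods averaging 20% CPU against a 60% target: scale in.
scale_in = desired_replicas(4, current_metric=20, target_metric=60)
```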

5. What is Google Cloud Dataflow, and what are its benefits?

Google Cloud Dataflow is a fully managed service for running Apache Beam pipelines on Google Cloud Platform. It provides a simplified, serverless approach to batch and real-time data processing. Its benefits include automatic resource management, dynamic work rebalancing, and the ability to build pipelines with the Apache Beam SDKs, such as Java or Python.

6. How do Google Cloud's networking products ensure secure and reliable connectivity?

Google Cloud's networking products are designed to provide secure, high-performance, and reliable connectivity. Here's how they achieve this:

- Google Cloud VPC (Virtual Private Cloud): VPC provides a private network with IP allocation, routing, and network firewall policies to ensure secure connectivity within your cloud environment. It supports both IPv4 and IPv6 for global reach and scalability.

- Cloud Load Balancing: This service automatically distributes traffic across servers to ensure high availability and reliability. It also provides cross-region load balancing, allowing your application to stay resilient even if an entire region goes down.

- Cloud Armor: This service works with Cloud Load Balancing to defend against DDoS attacks, thus ensuring secure connectivity.

- Cloud CDN (Content Delivery Network): By caching content close to the users, Cloud CDN ensures fast, reliable content delivery to users worldwide.

- Cloud Interconnect and Cloud VPN: These services provide secure, high-performance connectivity between your cloud resources and your on-premises, hosted, or other cloud environments.

- Cloud DNS: This scalable, reliable DNS service ensures your application is easily accessible from anywhere in the world.

- Private Google Access: This service allows VM instances with internal IP addresses to reach Google APIs and services securely without needing a public IP.

When used together, all these products provide a comprehensive solution for secure and reliable connectivity in Google Cloud.
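To make the load-balancing idea from the list above more tangible, here is a toy round-robin balancer that skips backends marked unhealthy. It is only a sketch of the general technique: the class, the backend names, and the health-marking method are hypothetical, and Cloud Load Balancing itself uses more sophisticated, capacity-aware distribution.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Toy balancer: rotates over backends and skips unhealthy ones."""

    def __init__(self, backends):
        self._backends = list(backends)
        self._healthy = set(self._backends)
        self._rotation = cycle(self._backends)

    def mark_unhealthy(self, backend):
        self._healthy.discard(backend)

    def next_backend(self):
        # Try each backend at most once per call.
        for _ in range(len(self._backends)):
            candidate = next(self._rotation)
            if candidate in self._healthy:
                return candidate
        raise RuntimeError("no healthy backends available")


lb = RoundRobinBalancer(["us-central1-vm", "europe-west1-vm", "asia-east1-vm"])
before = [lb.next_backend() for _ in range(3)]
lb.mark_unhealthy("europe-west1-vm")
after = [lb.next_backend() for _ in range(4)]
```

After the European backend is marked unhealthy, requests keep flowing to the remaining two, which is the behavior that keeps an application available during a partial outage.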

7. Can you explain the concept of Google Cloud Functions?

Google Cloud Functions is a serverless execution environment for building and connecting cloud services. You write simple, single-purpose functions that are triggered by events emitted from your cloud infrastructure and services. You attach a function to an event, and it runs automatically when that event fires, which lets you automate cloud workflows with minimal operational overhead.
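As an illustration, the sketch below is written in the style of a Python event-driven function that receives an `event` payload and a `context` object. The function name, the bucket, and the file name are made up, and the assumption is that the event is a dict carrying the uploaded object's `bucket` and `name`, as with a Cloud Storage trigger.

```python
def on_file_uploaded(event, context):
    """Handle a Cloud Storage "object finalized" style event.

    `event` is assumed to be a dict carrying the object's metadata;
    `context` carries event metadata and is unused in this sketch.
    """
    bucket = event["bucket"]
    name = event["name"]
    message = f"Processing gs://{bucket}/{name}"
    print(message)
    return message


# Local simulation: in the cloud, the platform would invoke the function.
sample_event = {"bucket": "example-uploads", "name": "reports/2024-01.csv"}
result = on_file_uploaded(sample_event, context=None)
```

Because the function is plain Python, it can be exercised locally with a hand-built event before it is deployed.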

8. What is the role of Google Cloud IAM?

Google Cloud Identity and Access Management (IAM) lets administrators manage and control access to specific resources. With IAM, you authorize or restrict actions on resources by granting roles, which are collections of permissions, to users, groups, and service accounts. It provides a unified view of your organization's security policies and built-in auditing, which simplifies compliance. Overall, IAM helps you maintain control and visibility over your organization's resources and keep them secure.
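A rough way to picture role-based access is a policy made of bindings, each of which ties a role to a list of members. The sketch below uses a dict shaped like an IAM policy document; the `has_role` helper and the example identities are hypothetical, and real IAM evaluation also accounts for things this sketch ignores, such as inheritance through the resource hierarchy and conditions.

```python
policy = {
    "bindings": [
        {"role": "roles/storage.objectViewer",
         "members": ["user:alice@example.com", "group:analysts@example.com"]},
        {"role": "roles/storage.admin",
         "members": ["user:bob@example.com"]},
    ]
}


def has_role(policy, member, role):
    """Return True if `member` is directly bound to `role` in `policy`."""
    return any(
        binding["role"] == role and member in binding["members"]
        for binding in policy["bindings"]
    )


alice_can_view = has_role(policy, "user:alice@example.com", "roles/storage.objectViewer")
alice_is_admin = has_role(policy, "user:alice@example.com", "roles/storage.admin")
```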

9. How does Google Cloud AutoML benefit businesses?

Google Cloud AutoML is a powerful tool that enables individuals without machine learning expertise to access the benefits of machine learning. This platform allows businesses to create customized machine learning models with minimal effort, which can be tailored to suit their specific needs. With Google Cloud AutoML, businesses can leverage the advantages of machine learning to improve their operations without having to invest significant amounts of time or resources.

10. Can you describe how Google Cloud Bigtable works?

Google Cloud Bigtable is a scalable, fully managed NoSQL database service. It is designed to collect and retain data from 1TB to hundreds of PB. It offers low latency and high throughput, making it suitable for big data and real-time applications. It integrates seamlessly with popular big data tools like Hadoop and supports the Apache HBase API and the Google Cloud Bigtable API.
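One reason Bigtable can serve low-latency reads is that rows are stored sorted by row key, so all rows sharing a key prefix sit next to each other and can be read as a range. The toy model below imitates that with a sorted list and binary search; the `sensor#timestamp` key layout and the readings are illustrative assumptions, not a prescribed schema.

```python
import bisect

# Rows kept sorted by key, mimicking Bigtable's lexicographic row ordering.
rows = sorted([
    ("sensor-a#20240101T0000", 21.5),
    ("sensor-a#20240101T0100", 21.9),
    ("sensor-b#20240101T0000", 19.2),
    ("sensor-c#20240101T0000", 23.0),
])
keys = [key for key, _ in rows]


def prefix_scan(prefix):
    """Return all rows whose key starts with `prefix` using binary search."""
    start = bisect.bisect_left(keys, prefix)
    # "\uffff" sorts after any character used in these keys.
    end = bisect.bisect_right(keys, prefix + "\uffff")
    return rows[start:end]


sensor_a_rows = prefix_scan("sensor-a#")
```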

GCP data engineer interview questions

These GCP interview questions will help you update your knowledge on the Google platform and ensure that you match the skills listed in cloud engineer job descriptions.

11. Can you explain the difference between BigQuery and Bigtable?

BigQuery is a fully managed, serverless data warehouse that enables super-fast SQL queries using the processing power of Google's infrastructure. It's designed for analyzing large datasets. Bigtable, on the other hand, is a NoSQL big data database service designed for low-latency, large-scale applications and operational analytics.

12. How would you design a data pipeline in Google Cloud Platform?

Designing a data pipeline in Google Cloud Platform (GCP) involves several steps and services. Here's a basic outline:

- Data ingestion: The first step is to ingest data from different sources. This could be from on-premises databases, third-party applications, or other cloud platforms. GCP provides several services for data ingestion, like Cloud Pub/Sub for real-time messaging, Cloud Storage for unstructured data, and Cloud SQL for structured data.

- Data processing: Once the data is ingested, it needs to be processed. This could involve cleaning the data, transforming it into a suitable format, or running computations. You can use Cloud Dataflow, a fully-managed service for stream and batch processing, or Cloud Dataproc, a managed Hadoop and Spark service for big data processing.

- Data storage: After processing, the data usually needs to be stored for further analysis. Depending on your needs, you can use BigQuery, a fully-managed and highly scalable data warehouse, Cloud Bigtable for NoSQL workloads, or Cloud Spanner for relational database needs.

- Data analysis and visualization: The processed data can be analyzed and visualized using tools like Looker Studio (formerly Google Data Studio) or Looker. You can also use BigQuery ML to create and execute machine learning models on your data.

Designing a data pipeline in GCP should also involve security, reliability, and cost considerations. You should use Cloud IAM for access control, ensure your data is backed up for reliability, and monitor your usage to control costs.
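The four stages outlined above can be sketched as a chain of plain Python functions. Each function is only a stand-in for the kind of service named in the outline (ingestion, processing, storage, analysis); the function names and sample records are hypothetical, and no Google Cloud API is called.

```python
def ingest():
    """Stand-in for ingestion: yield raw records as they arrive."""
    yield from [
        {"user": "u1", "amount": "10.50"},
        {"user": "u2", "amount": "bad-value"},
        {"user": "u1", "amount": "4.50"},
    ]


def process(records):
    """Stand-in for processing: drop invalid rows and convert types."""
    for record in records:
        try:
            yield {"user": record["user"], "amount": float(record["amount"])}
        except ValueError:
            continue  # a real pipeline might route this to a dead-letter sink


def store(records):
    """Stand-in for storage: materialize the cleaned rows in a table."""
    return list(records)


def analyze(table):
    """Stand-in for analysis: total amount per user."""
    totals = {}
    for row in table:
        totals[row["user"]] = totals.get(row["user"], 0.0) + row["amount"]
    return totals


table = store(process(ingest()))
totals = analyze(table)
```

Keeping each stage behind its own function mirrors the way the managed services are swapped in and out: the processing step does not need to know where the data came from or where it ends up.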

13. What is Google Cloud Dataflow? What are its benefits?

Google Cloud Dataflow is a fully managed service used for Apache Beam pipeline execution within the Google Cloud Platform. It provides a simplified, serverless approach for batch and real-time data processing. Its benefits include automatic resource management, dynamic work rebalancing, and creating pipelines using Java or Python SDKs.

14. How would you handle large datasets in Google Cloud Platform?

Google Cloud Platform offers several services to handle large datasets. You can use Cloud Storage to store large amounts of data, BigQuery to analyze large datasets, and Bigtable to handle large-scale operational analytics. For processing large datasets, you can use Cloud Dataflow or Cloud Dataproc.

15. What is Google Cloud Dataproc, and how does it work?

Google Cloud Dataproc is a managed service that runs Apache Hadoop and Spark jobs. It simplifies the creation, configuration, and management of Hadoop clusters, reducing the time required to start a job. It also supports the most common Hadoop ecosystem tools, allowing you to use existing skills and code.
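The kind of job typically run on a Hadoop or Spark cluster follows a map-then-reduce shape. The word count below shows that shape in plain Python on a single machine; it does not use the Hadoop or Spark APIs, and the function names and input lines are illustrative only.

```python
from collections import defaultdict


def map_phase(lines):
    """Emit a (word, 1) pair for every word in the input."""
    for line in lines:
        for word in line.lower().split():
            yield word, 1


def reduce_phase(pairs):
    """Group pairs by key and sum the counts."""
    counts = defaultdict(int)
    for word, count in pairs:
        counts[word] += count
    return dict(counts)


lines = ["big data on Dataproc", "Big clusters process big data"]
word_counts = reduce_phase(map_phase(lines))
```

On a cluster, the map and reduce phases would run in parallel across many workers; the managed service takes care of provisioning and configuring those workers.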

16. How does Google Cloud Datalab help in data exploration and visualization?

Google Cloud Datalab is a tool for exploring, transforming, analyzing, and visualizing data on the Google Cloud Platform. It provides a Jupyter notebook-based environment with support for multiple programming languages, built-in machine learning APIs, and easy integration with BigQuery. Note that Cloud Datalab has been deprecated; Vertex AI Workbench is the current notebook-based offering on Google Cloud.

17. Can you explain the role of Google Cloud IAM in data security?

Google Cloud Identity and Access Management (IAM) is a tool that helps administrators manage and control access to resources within an organization. With IAM, you define who has access to a resource and which actions they are allowed to perform. This ensures that only authorized personnel can access sensitive data and perform necessary actions.

IAM provides a unified view of security policy across your organization, making it easy to manage and monitor security policies. This helps ensure that all users within an organization adhere to the same security policies and procedures.

Furthermore, IAM comes with built-in auditing capabilities that make it easier to monitor and track user activities. This auditing simplifies compliance processes and helps ensure that regulatory standards are followed.

Overall, Google Cloud Identity and Access Management is an essential tool for any organization that values data security and wants to ensure that only authorized personnel can access sensitive resources.

18. How would you ensure data reliability and consistency in the Google Cloud Platform?

Data reliability and consistency in Google Cloud Platform can be ensured by using fully managed relational databases: Cloud SQL, which offers high-availability configurations, and Cloud Spanner, which adds horizontal scaling with strong transactional consistency across regions. Using Cloud Storage for data backup and disaster recovery also helps ensure data reliability.

19. What is the role of a data engineer in Google Cloud Platform?

A data engineer in Google Cloud Platform is responsible for designing, building, maintaining, and optimizing data processing systems and infrastructure. Their role involves managing and organizing data while also ensuring its quality, reliability, and accessibility.

They work with various Google Cloud services, such as BigQuery for data warehousing, Cloud Dataflow for data processing, and Cloud Pub/Sub for real-time messaging. They also use services like Cloud Storage and Cloud SQL based on specific data storage and database requirements.

Data engineers play a crucial role in making data usable for data scientists and analysts by creating data pipelines, implementing data transformations, and ensuring data consistency. They also focus on data security and compliance, using tools like Google Cloud IAM for access management and Cloud Data Loss Prevention (DLP) for sensitive data protection.

A data engineer on Google Cloud Platform enables an organization to make the best use of its data by ensuring it is properly stored, processed, and available when needed.

Google Cloud architect interview questions

Interviewers usually ask some of these technical questions to see how your background matches the skills and capabilities listed on your cloud engineer resume. You can use these GCP questions to quickly update your knowledge of cloud engineering obtained while preparing for your cloud certification exam.

20. What is the role of a Google Cloud Architect?

A Google Cloud Architect designs, develops, and manages robust, secure, scalable, highly available, and dynamic solutions to drive business objectives. They are responsible for overseeing the cloud computing strategy of an organization, including cloud adoption plans, cloud application design, and cloud management and monitoring.

21. Can you explain the difference between Infrastructure as a Service (IaaS), Platform as a Service (PaaS), and Software as a Service (SaaS)?

- Infrastructure as a Service (IaaS): This is the most flexible category of cloud services. The provider supplies and manages the underlying compute, networking, and storage infrastructure, while you manage the operating systems and applications that run on it. With IaaS, businesses can purchase resources on demand and as needed instead of buying hardware outright. IaaS offerings are available from Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform; Compute Engine is Google Cloud's example.

- Platform as a Service (PaaS): This category is designed to give developers the tools to build and host web applications. PaaS providers host the hardware and software on their infrastructure, freeing developers from installing in-house hardware and software to develop or run a new application. Examples include Heroku, Google App Engine, and Red Hat's OpenShift.

- Software as a Service (SaaS): This model delivers software applications over the Internet, on demand, and typically on a subscription basis. With SaaS, cloud providers manage the software application and underlying infrastructure and handle any maintenance, like software upgrades and security patching. Users connect to the application over the Internet, usually with a web browser on their phone, tablet, or PC. Examples include Google Workspace (formerly Google Apps), Salesforce, and Microsoft 365 (formerly Office 365).

22. How would you design a multi-region deployment in Google Cloud Platform?

Designing a multi-region deployment in GCP involves several steps. First, you need to choose the regions based on your business needs. Then, you can use services like Cloud Load Balancing and Cloud Storage for multi-region deployment. You also need to consider data replication and latency issues.
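The latency and failover considerations can be sketched as a simple routing rule: send a client to the healthy region with the lowest latency, and fall back to the next-closest one during an outage. This is a minimal illustration under stated assumptions; the `pick_region` helper is hypothetical and the latency figures are invented for the example.

```python
def pick_region(latencies_ms, healthy_regions):
    """Pick the healthy region with the lowest measured latency."""
    candidates = {r: ms for r, ms in latencies_ms.items() if r in healthy_regions}
    if not candidates:
        raise RuntimeError("no healthy region available")
    return min(candidates, key=candidates.get)


# Illustrative latencies from one client; the numbers are made up.
latencies_ms = {"us-east1": 35, "europe-west1": 110, "asia-southeast1": 210}

primary = pick_region(latencies_ms, {"us-east1", "europe-west1", "asia-southeast1"})
# Simulate a regional outage: traffic fails over to the next-closest region.
failover = pick_region(latencies_ms, {"europe-west1", "asia-southeast1"})
```

In a real deployment, a global load balancer makes this decision for you; the sketch only shows why data must already be replicated to the fallback region before the outage happens.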

23. Can you explain the role of Google Cloud IAM in managing access to resources?

Google Cloud Identity and Access Management (IAM) helps administrators decide who can take action on specific resources. It provides a unified view of security policy across your organization, with built-in auditing to ease compliance processes.

24. How would you ensure data security in Google Cloud Platform?

Data security in the Google Cloud Platform can be ensured using several tools and practices. These include using IAM for access control, using Web Security Scanner (formerly Cloud Security Scanner) to identify security vulnerabilities in web applications, encrypting data in transit and at rest, and using Cloud Audit Logs to maintain audit trails.

25. What is Google Cloud Anthos, and what are its benefits?

Google Cloud Anthos is a hybrid and multi-cloud platform that allows you to run your applications anywhere securely and consistently. It provides a single managed platform for consistent deployments across different environments. Its benefits include increased operational efficiency, improved security, and greater application portability.

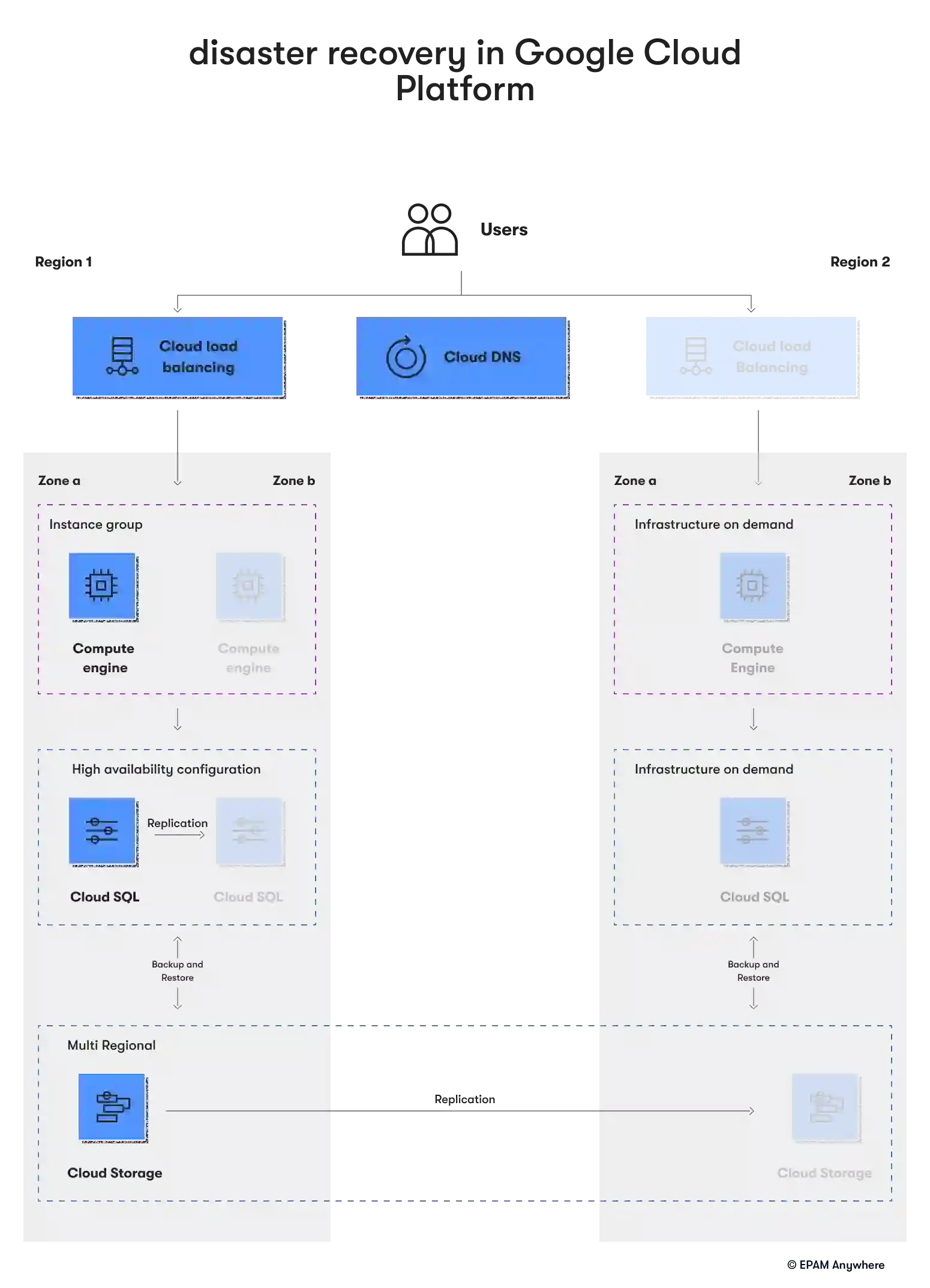

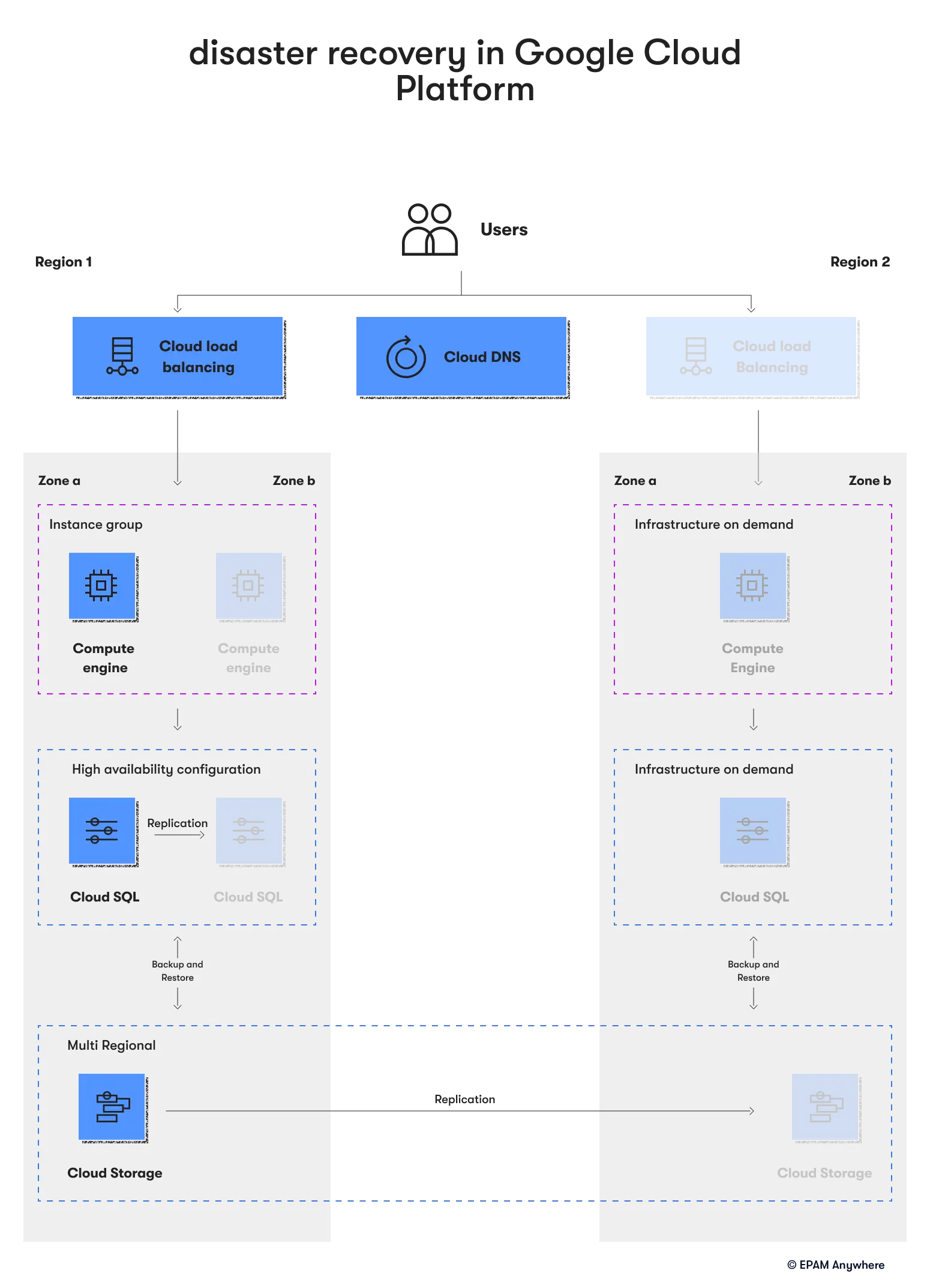

26. How would you handle disaster recovery in Google Cloud Platform?

Disaster recovery in Google Cloud Platform can be handled by using services like Cloud Storage for data backup, Cloud SQL for database replication, and Compute Engine for creating and managing virtual machines. You can also use Cloud DNS for DNS management and Cloud Load Balancing for distributing user traffic.

27. How does Google Cloud AutoML benefit businesses?

Google Cloud AutoML offers several benefits to businesses:

- Simplified machine learning: AutoML allows businesses to leverage machine learning models without requiring machine learning or coding expertise.

- Custom models: Businesses can create machine learning models based on their needs. This can help in improving the accuracy of predictions.

- Scalability: AutoML is built on Google Cloud, which means it can easily scale as the business grows. It can handle large datasets and high demand without any additional infrastructure investment.

- Integration: AutoML can be easily integrated with other Google Cloud services, helping provide seamless workflow.

- Speed: AutoML significantly reduces the time it takes to create and deploy machine learning models. This can help make data-driven decisions quickly.

- Cost-effective: With AutoML, businesses only pay for what they use. This can make machine learning more affordable, especially for small and medium-sized businesses.

28. What is the role of Google Kubernetes Engine in application deployment?

Google Kubernetes Engine (GKE) is a managed environment for deploying, scaling, and managing containerized applications. It takes care of the underlying Kubernetes infrastructure, so you can focus on deploying applications, scaling them based on demand, and improving resource utilization.

Conclusion

In conclusion, these Google Cloud interview questions are curated to help you gain a solid understanding of the platform and its various services. Whether you're a beginner or an experienced professional, these Google Cloud engineer questions can help you prepare for your upcoming interview and showcase your expertise in Google Cloud technologies.

If you're a Google Cloud engineer seeking new opportunities, consider joining EPAM Anywhere. We offer a wide range of remote Google Cloud engineer jobs, allowing you to work on challenging and exciting projects from your home. At EPAM Anywhere, we believe in providing our engineers with the flexibility to work remotely while also offering them the chance to grow and develop their skills.

Our platform connects talented professionals with top-tier projects seeking expertise in Google Cloud and other technologies. As a part of our team, you can work on projects aligned with your career goals and interests. So, if you're ready to take your career to the next level, join us at EPAM Anywhere and become a part of our global community of talented remote professionals.

With a focus on remote lifestyle and career development, Gayane shares practical insight and career advice that informs and empowers tech talent to thrive in the world of remote work.

Explore our Editorial Policy to learn more about our standards for content creation.
